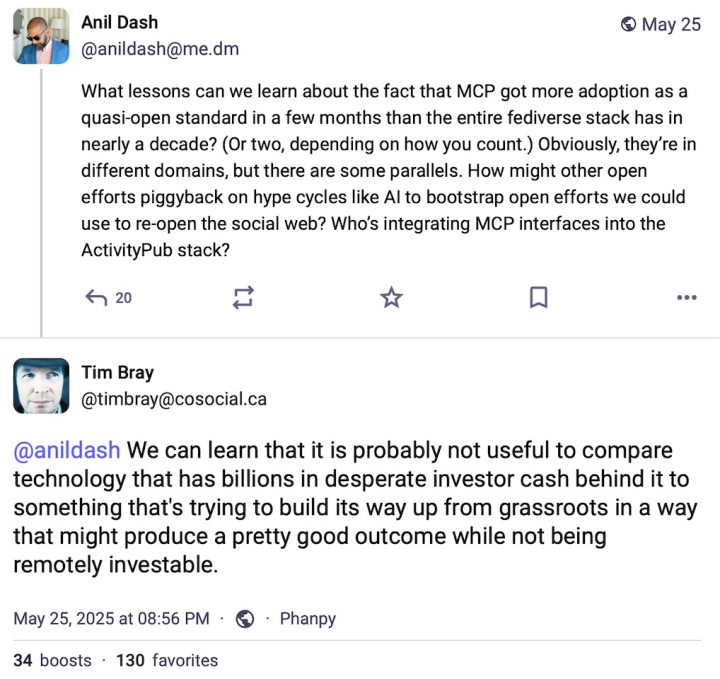

My smartest friends have bananas arguments about LLM coding.

A couple of months ago, I wrote:

[...] It may become impossible to launch a new programming language. No corpus of training data in the coding AI assistant; new developers don't want to use it because their assistant can't offer help; no critical mass of new users; language dies on the vine. --@zarfeblong, March 28

I was replying to a comment by Charlie Stross, who noted that LLMs are trained on existing data and therefore are biased against recognizing new phenomena. My point was that in tech, we look forward to learning about new inventions -- new phenomena by definition. Are AI coding tools going to roadblock that?

Already happening! Here's Kyle Hughes last week:

At work I’m developing a new iOS app on a small team alongside a small Android team doing the same. We are getting lapped to an unfathomable degree because of how productive they are with Kotlin, Compose, and Cursor. They are able to support all the way back to Android 10 (2019) with the latest features; we are targeting iOS 16 (2022) and have to make huge sacrifices (e.g Observable, parameter packs in generics on types). Swift 6 makes a mockery of LLMs. It is almost untenable.

[...] To be clear, I’m not part of the Anti Swift 6 brigade, nor aligned with the Swift Is Getting Too Complicated party. I can embed my intent into the code I write more than ever and I look forward to it becoming even more expressive.

I am just struck by the unfortunate timing with the rise of LLMs. There has never been a worse time in the history of computers to launch, and require, fundamental and sweeping changes to languages and frameworks. --@kyle, June 1 (thread)

That's not even a new language, it's just a new major version. Is C++26 going to run into the same problem?

Hat tip to John Gruber, who quotes more dev comments as we swing into WWDC week.

Speaking of WWDC, the new "liquid glass" UI is now announced. (Screenshots everywhere.) I like it, although I haven't installed the betas to play with it myself.

Joseph Humphrey has, and he notes that existing app icons are being glassified by default:

Kinda shocked to see these 3rd party app icons having been liquid-glassed already. Is this some kind of automatic filter, or did Apple & 3rd parties prep them in advance?? --@joethephish, June 10 (thread)

The icon auto-glassification uses non-obvious heuristics, and Joe's screenshots show some weird artifacts.

I was surprised too! For the iOS 7 "flatten it all" UI transition, existing apps did not get the new look -- either in their icons or their internal buttons, etc. -- until the developer recompiled with the new SDK. (And thus had a chance to redesign their icons for the new style.) As I wrote a couple of months ago:

[In 2012] Apple put in a lot of work to ensure that OS upgrades didn't break apps for users. Not even visually. (It goes without saying that Apple considers visual design part of an app's functionality.) The toolkit continued to support old APIs, and it also secretly retained the old UI style for every widget.

-- me, April 9

Are they really going to bag that policy for this fall? I guess they already sort of did. Last year's "tint mode" squashed existing icons to tinted monochrome whether they liked it or not. But that was a user option, and not a very popular one, I suspect.

This year's icon change feels like a bigger rug-pull for developers. And developers have raw nerves these days.

This is supposed to be a prediction post. I guess I'll predict that Apple rolls this back, leaving old (third-party) icons alone for the iOS 26 full release. Maybe.

(I see Marco Arment is doing a day of "it's a beta, calm down and send feedback". Listen to him, he knows his stuff.)

But the big lurking announcement was iPadOS gaining windows, a menu bar, and a more (though not completely) file-oriented environment. A lot of people have been waiting years for those features. Craig Federighi presented the news with an understated but real wince of apology.

Personally, not my thing. I don't tend to use my iPad for productive work. And it's not for want of windows and a menu bar; it's for want of a keyboard and a terminal window. I have a very terminal-centric work life. My current Mac desktop has nine terminal windows, two of which are running Emacs.

(No, I don't want to carry around an external keyboard for my iPad. If I carry another big thing, it'll be the MacBook, and then the problem is solved.)

But -- look. For more than a decade, people have been predicting that Apple would kill MacOS and force Macs to run some form of iOS. They predicted it when Apple launched Gatekeeper, they predicted it when Apple brought SwiftUI apps to MacOS, they predicted it when Apple redesigned the Settings app.

I never bought it before. Watching this week's keynote, I buy it. Now there is room for i(Pad)OS to replace MacOS.

Changing or locking down MacOS is a weak signal because people use MacOS. You can only do so much to it. Apple has been tightening the bolts on Gatekeeper at regular intervals, but you can still run unsigned apps on a Mac. The hoops still exist. You can install Unix command-line tools with Homebrew.

But adding features to iPad is a different play! That's pushing the iPad UI in a direction where it could plausibly take over the desktop-OS role. And this direction isn't new, it's a well-established thing. The iPad has been acquiring keyboard/mouse features for years now.

So is Apple planning to eliminate MacOS entirely, and ship Macs with (more or less) iPadOS installed? Maybe! This is all finger-in-the-wind. I doubt it's happening soon. It may never happen. It could be that Apple wants iPad to stand on its own as a serious mobile productivity platform, as good as the Mac but separate from it.

But Apple thinks in terms of company strategy, not separate siloed platforms. And, as many people have pointed out, supporting two similar-but-separate OSes is a terrible business case. Surely Apple has better uses for that redundant budget line.

Abstractly, they could unify the two OSes rather than killing one of them. But, in practice, they would kill MacOS. Look at yesterday's announcements. iPad gets the new features; Mac gets nothing. (Except the universal shiny glass layer.) The writing is not on the wall but the wind is blowing, and we can see which way.

Say this happens, in 2028 or whenever. (If Apple still exists, if I haven't died in the food riots, etc etc.) Can my terminal-centric lifestyle make its way to an iPad-like world?

...Well, that depends on whether they add a terminal app, doesn't it? Fundamentally I don't care about MacOS as a brand. I just want to set up my home directory and my .emacs file and install Python and git and npm and all the other stuff that my habits have accumulated. You have no idea how many little Python scripts are involved in everyday tasks like, you know, writing this blog post.

(Okay, you do know that because my blogging tool is up on GitHub. The answer is four. Four vonderful Python scripts, ah ah ah!)

If I can't do all that in MacOS 28/29/whichever, it'll be time to pick a Linux distro. Not looking forward to that, honestly. (I fly Linux servers all the time, but the last time I used a Linux desktop environment it was GNOME 1.0? I think?)

Other notes from WWDC. (Not really predictions, sorry, I am failing my post title.)

- Tim Cook looks tired. I don't mean that in a Harriet Jones way! I assume he's run himself ragged trying to manage political crap. Craig Federighi is still having fun but I felt like he was over-playing it a lot of the time. Doesn't feel like a happy company. Eh, what do I know, I'm trying to read tea leaves from a scripted video.

- I said "Mac gets nothing" but that's unfair. The Spotlight update with integrated actions and shortcuts looks extremely sexy. Yes, this is about getting third-party devs to support App Intents so that Siri/AI can hook into them. But it will also be great for Automator and other non-AI scripting tools.

- WWDC is a software event; Apple never talks about new hardware there. I know it. You know it. But it sure was weird to have a whole VisionOS segment pushing new features when the rev1 Vision Pro is at a dead standstill. My sense is that the whole ecosystem is on hold waiting for a consumer-viable rev2 model.

- I think the consumer-viable rev2 model is coming this fall. There, that's a prediction. Worth what you paid for it.

- I'm enjoying the Murderbot show but damn if Gurathin isn't a low-key Vision Pro ad. He's got the offhand tap-fingers gesture right there.

- I'm excited about the liquid glass UI. I want to play with it. Fun is fun, dammit.

- I hate redesigning app icons for a new UI. Oh, well, I'll manage. (EDIT-ADD: Turns out Apple is pushing a new single-source process which generates all icon sizes and modes. Okay! Good news there.)